From software development to economics to marketing, familiarity with machine learning will make you better at your job. The power of machine learning is in your reach today. You don't have to understand models or tensors or any math at all. Now is the time to add ML to your professional tool belt.

"I think A.I. is probably the single biggest item in the near term that’s likely to affect humanity." - Elon Musk

Let's be honest: the ML space is a jungle. "GPUs and clusters and Python, oh my!" What's the best way to use ML in your world?

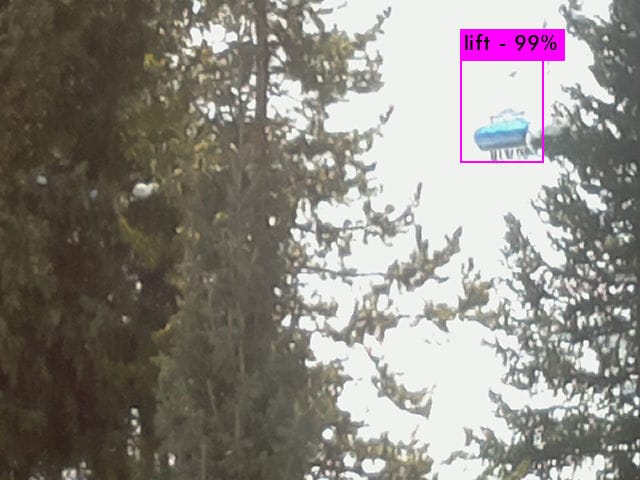

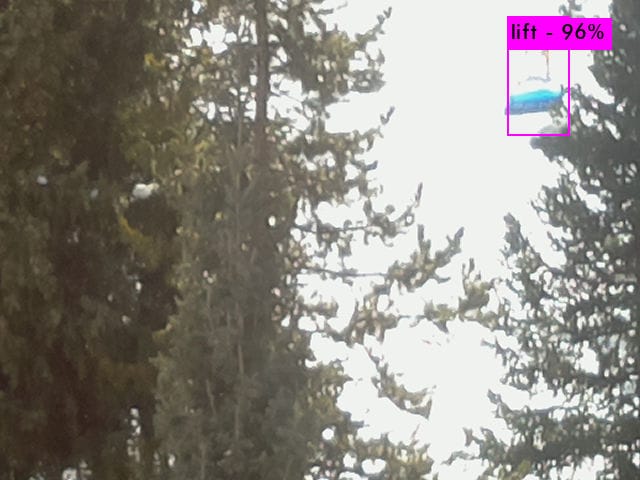

I've had the pleasure of using a variety of ML tools in the past year, and have stumbled on a wonderful workflow. This workflow is easy for non-technical users and flexible enough for the most demanding data scientists. I recently used it to train a neural network to identify a custom type of object: ski lifts.

Custom object recognition for ski lifts

Read on and I'll share the best MLOps tools and workflow for individuals and small teams.

ML Research vs. MLOps

MLOps is applied machine learning. Just like you don't have to know how to program in C++ to use your Chrome browser, a deep understanding of machine learning internals is not necessary to apply ML to your business.

Machine learning can solve a vast diversity of business problems, including recommendation engines, natural language understanding, audio & video analysis, trend prediction, and more. Image analysis is an excellent way to get familiar with ML, without writing any code.

Transfer Learning

It is expensive and time-consuming to train a modern neural network from scratch. Historically, it was too expensive for individuals or small teams. Training from scratch also requires a gigantic data set with thousands of labeled images.

These days, transfer learning allows you to take a pre-trained model and "teach" it to recognize novel objects. This approach lets you "stand on the shoulders of giants" by leveraging their work. With transfer learning, you can train an existing object recognition model to identify custom objects in under an hour. Although the first papers about transfer learning came out in the 1990s, it wasn't practically useful until recently.

YOLOv3: You Only Look Once

There are many solid object recognition models out there - I chose YOLOv3 for its fast performance on edge devices with minimal compute power. How does YOLOv3's neural network actually work? That is not a question you have to answer in order to apply ML to your business.

If you are curious, PJReddie's overview is both educational and entertaining. Also, check out the project's license and commit messages if you are looking for a good laugh.

Below, we'll take a quick look at using the Supervise.ly platform for labeling, augmentation, training, and validation. We'll also talk about using AWS Spot Instances for low-cost training.

Supervise.ly

Supervise.ly is an end-to-end platform for applying machine learning to computer vision problems. It has a powerful free tier, a beautiful UI, and is the perfect way to get familiar with machine learning. No coding required.

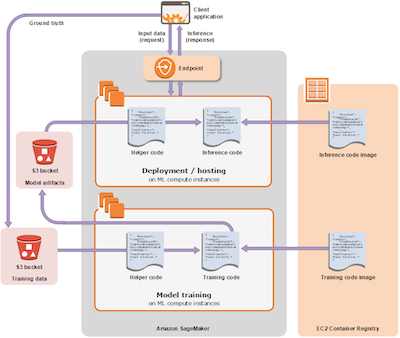

Note that AWS has a comparable offering: AWS SageMaker. I've had great luck with SageMaker and can recommend it to organizations that are large enough to have a dedicated "operations" person. For smaller teams, Supervise.ly is my go-to tool.

Another caution with SageMaker is that it requires familiarity with Jupyter Notebooks and Python. Jupyter Notebooks are amazing, and there's always at least one open in my browser tabs. The interactive, iterative approach is unbeatable for experimentation and research. Unfortunately, they are unaccommodating to non-developers.

Supervise.ly's web UI is a much more accessible tool for business users. It also supports Jupyter Notebooks for more technical users.

AWS Spot Instances

The one trick to Supervise.ly is that you need to provide a GPU-powered server. If you have one under your desk, you're all set. If not, AWS is a cheap and easy way to get started.

AWS's p3.2xlarge instance type is plenty powerful for experimentation and will set you back $3.06 per hour. Training the YOLOv3 model to recognize chair lifts took under 15 minutes - costing way less than a latte.
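For the curious, the arithmetic behind that claim is easy to check. Here is a minimal Python sketch, assuming the $3.06 hourly rate quoted above and a full 15 minutes of training. The `training_cost` helper is just an illustrative name, and real AWS billing may round differently.

```python
def training_cost(hourly_rate_usd: float, minutes: float) -> float:
    """Prorate an hourly on-demand rate over a training run."""
    return hourly_rate_usd * minutes / 60


# Assumed inputs: the p3.2xlarge rate and the 15-minute upper bound from this post.
cost = training_cost(3.06, 15)
print(f"Estimated training cost: ${cost:.3f}")
```

That works out to well under a dollar for the whole training run.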

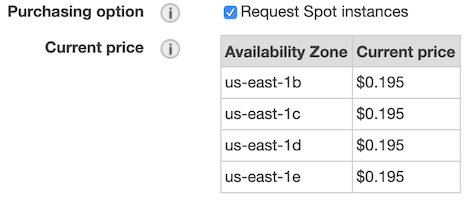

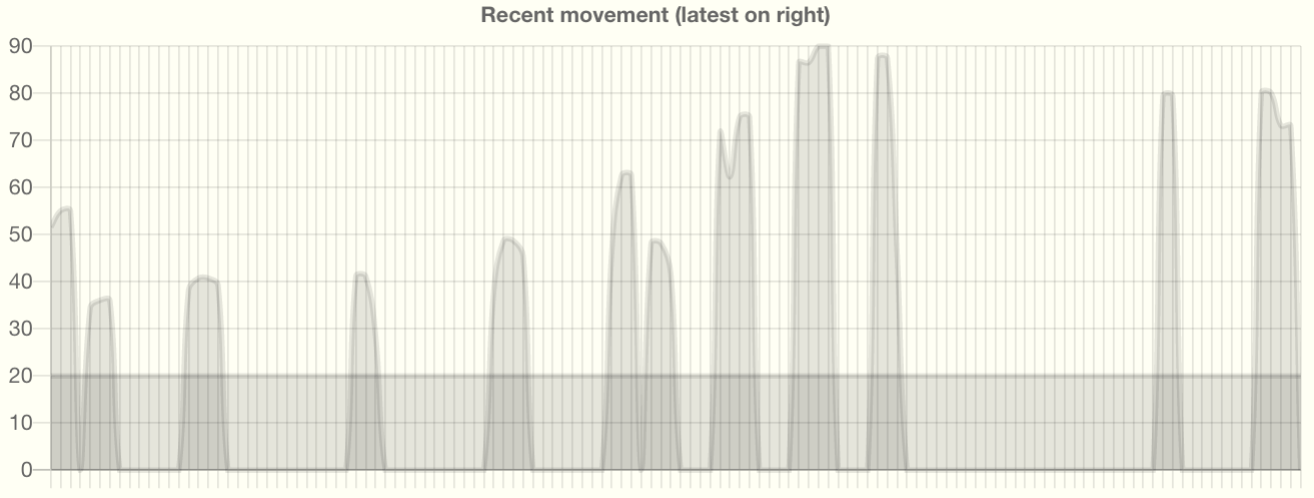

That's not a bad deal, but AWS Spot Instances are even better. Spot Instances are interesting because the prices change over time, and there is a possibility AWS will reclaim your instance on short notice when it needs the capacity back. With a training run this short, these limitations have little impact on our use case. I highly recommend clicking the "Request Spot Instances" checkbox.
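If you would rather script the launch than click the checkbox, the same request can be made through the AWS SDK for Python (boto3). This is a rough sketch, not the workflow used in this post: the helper names and the `ami-EXAMPLE` image ID are placeholders I made up, and a real launch would also need things like a key pair and a security group.

```python
def build_spot_request(ami_id: str, instance_type: str = "p3.2xlarge") -> dict:
    """Build keyword arguments for EC2's run_instances call, asking for a Spot Instance."""
    return {
        "ImageId": ami_id,
        "InstanceType": instance_type,
        "MinCount": 1,
        "MaxCount": 1,
        # This block is the scripted equivalent of the "Request Spot Instances" checkbox.
        "InstanceMarketOptions": {
            "MarketType": "spot",
            "SpotOptions": {
                "SpotInstanceType": "one-time",
                "InstanceInterruptionBehavior": "terminate",
            },
        },
    }


def launch_spot_instance(ami_id: str, ec2_client=None) -> str:
    """Launch one Spot Instance and return its instance ID."""
    if ec2_client is None:
        import boto3  # imported lazily so the request can be inspected without AWS access

        ec2_client = boto3.client("ec2")
    response = ec2_client.run_instances(**build_spot_request(ami_id))
    return response["Instances"][0]["InstanceId"]


# "ami-EXAMPLE" is a placeholder, not a real image ID.
request = build_spot_request("ami-EXAMPLE")
print(request["InstanceMarketOptions"]["MarketType"])
```

Only `launch_spot_instance` talks to AWS. Building the request is free, so you can inspect it before spending anything.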

Do It Yourself

Now is a great time to train your own custom model. I'll walk you through it in the ten-minute video below.

If you'd prefer a written tutorial, Supervise.ly has a good one here. It's also okay to just skip this section if you don't want hands-on experience.

If you follow along, you'll need to hit "pause" in a few places while we wait on the computer. You'll also need the image augmentation DTL below:

⚠️ Important: Be sure to terminate your spot instance when you have finished testing your model!
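The EC2 console's terminate action is all you need here. For readers who prefer a script, this is a minimal boto3 sketch of the same cleanup. The `terminate_instance` helper is a hypothetical name, and it assumes your AWS credentials and region are already configured.

```python
def terminate_instance(instance_id: str, ec2_client=None) -> str:
    """Terminate one EC2 instance and return the state AWS reports for it."""
    if ec2_client is None:
        import boto3  # imported lazily; only needed for a real AWS call

        ec2_client = boto3.client("ec2")
    response = ec2_client.terminate_instances(InstanceIds=[instance_id])
    return response["TerminatingInstances"][0]["CurrentState"]["Name"]
```

Pass it the instance ID shown in your EC2 console once you are done testing your model.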

Next Steps

In this post, we trained our own model to recognize custom objects. In a future post, I will share easy ways to deploy your custom model. We'll learn how to deploy it on your laptop, as a cloud API, and to an "edge" device like a Raspberry Pi.

"I think because of artificial intelligence, people will have more time enjoying being human beings." - Jack Ma

Looking for help applying machine learning to your business problems? I'm focused where software, infrastructure, and data meet security, operations, and performance. Follow or DM me on Twitter at @nedmcclain.