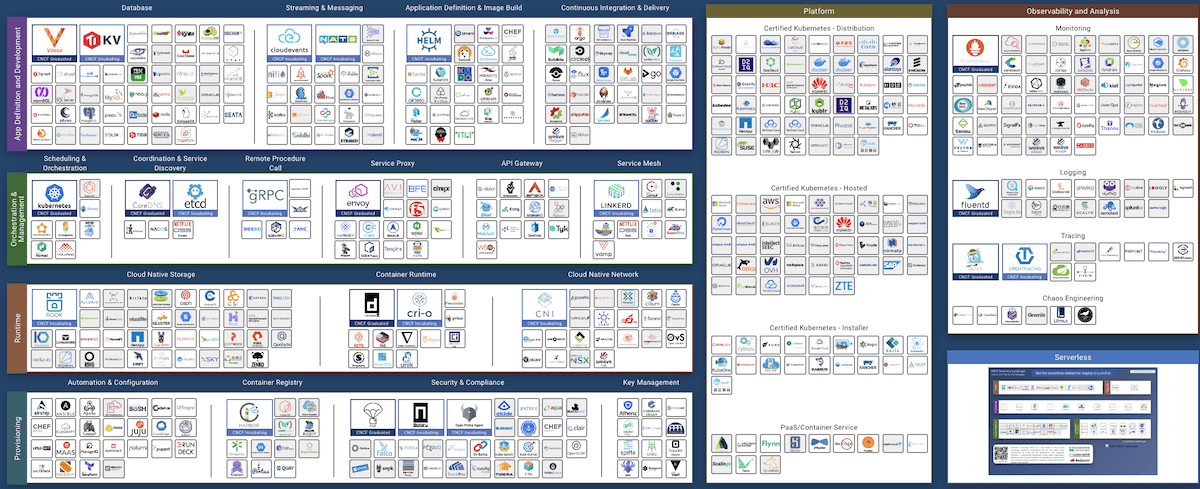

Some cloud native tools are only appropriate for “cloud native” startups. But look at the main goals of cloud native software, and you’ll see they are tightly aligned with enterprise digital transformation:

Operability, Observability, Elasticity, Resilience, and Agility.

This post identifies the mature cloud native tools that every enterprise should consider in 2020. The tools highlighted below are robust, proven, and hardened for enterprise use today. These are not just incremental improvements on existing IT tools; they are enabling technologies that cut costs, improve security, and foster a stronger DevOps culture.

Infrastructure as Code

If your ops team is still SSH’ing or RDP’ing into servers to make changes, Infrastructure as Code (IaC) should be your highest priority. Capturing your server, OS, and app configurations “as code” means you can track changes in a version control system like Git.

Although IaC feels a little odd to traditional sysadmins at first, it yields a ton of benefits. Everyone can see what changes were made, when, and by whom, at all layers of the IT stack. It’s easy to detect configuration drift, and you can prepare for a security audit in no time.
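To make “detect configuration drift” concrete, here is a minimal Python sketch of the comparison that tools like `terraform plan` or Ansible’s check mode perform in spirit: diff the desired state committed to version control against the state observed on a live server. The setting names and values are hypothetical, and the sketch assumes both states have already been collected into flat dictionaries. Real IaC tools gather observed state through their own providers and modules.

```python
from typing import Any, Dict, Tuple


def detect_drift(desired: Dict[str, Any], observed: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (desired_value, observed_value)} for every setting that differs.

    A setting missing on one side is reported with None in that position.
    """
    drift: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(desired.keys() | observed.keys()):
        if desired.get(key) != observed.get(key):
            drift[key] = (desired.get(key), observed.get(key))
    return drift


if __name__ == "__main__":
    # Hypothetical desired state, as committed to Git.
    desired = {"ntp_server": "time.example.com", "ssh_password_auth": "no", "nginx_version": "1.16"}
    # Hypothetical live state after someone SSH'd in and changed things by hand.
    observed = {"ntp_server": "time.example.com", "ssh_password_auth": "yes",
                "nginx_version": "1.16", "debug_mode": "on"}
    for setting, (want, have) in detect_drift(desired, observed).items():
        print(f"DRIFT {setting}: expected {want!r}, found {have!r}")
```

Because the desired state lives in Git, every drift report can be traced back to the commit that defined the expected value.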

Even though Chef and Puppet are the most popular tools, I usually recommend Ansible and Terraform to my enterprise clients. They are cross-platform (and cross-cloud) and are the most accessible IaC tools to understand, teach, and share — making them the most DevOps-friendly. Packer is worth investigating for anyone making their own VM or cloud server images.

Service Discovery

The vast majority of enterprises I’ve seen use DNS for “service discovery” — although sadly, hardcoded IP addresses are also common. Experienced IT admins can share plenty of war stories about DNS caching and propagation times. When you are targeting more than “three nines” of availability, traditional DNS servers aren’t sufficient for High Availability failover.
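The arithmetic behind “three nines” shows why DNS caching matters. This short Python sketch converts an availability target into an allowed-downtime budget and compares it with a DNS cache lifetime. The 300-second TTL is an illustrative assumption rather than a universal default, and some resolvers and clients cache records longer than their TTL.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def downtime_budget_minutes(availability: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Minutes of downtime allowed over `period_hours` for a target such as 0.999."""
    if not 0.0 < availability <= 1.0:
        raise ValueError("availability must be in (0, 1]")
    return (1.0 - availability) * period_hours * 60


if __name__ == "__main__":
    ttl_minutes = 300 / 60  # assumed DNS TTL of 300 seconds
    for target in (0.999, 0.9999, 0.99999):
        yearly = downtime_budget_minutes(target)
        monthly = downtime_budget_minutes(target, period_hours=30 * 24)
        print(f"{target:.3%}: {yearly:7.1f} min/year, {monthly:5.1f} min/month; "
              f"one {ttl_minutes:.0f}-minute cached failover uses "
              f"{ttl_minutes / monthly:.0%} of the monthly budget")
```

At four nines, the 30-day budget is about 4.3 minutes, so a single failover that waits out a five-minute DNS cache already exceeds it.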

There are dozens of Service Discovery tools on the cloud native landscape, and many of them are not so useful in the enterprise datacenter. Still, a few gems exist that can empower enterprise IT. They offer service health checks and automated failover, work with existing enterprise applications, and expose API-based management. Deploying service discovery usually means that legacy hardware load balancers can be decommissioned.
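The health checks and automated failover described above can be illustrated with a toy, in-memory registry in Python. The `Instance` and `ServiceRegistry` names and methods are hypothetical and do not mirror the API of Consul or any other product. The sketch only shows the core idea: lookups return the instances whose latest health check passed, so clients stop receiving a failed instance without a hardware load balancer in the path.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Instance:
    address: str
    port: int
    check: Callable[[], bool]  # hypothetical health check; real systems probe HTTP/TCP/scripts
    healthy: bool = True


@dataclass
class ServiceRegistry:
    """Toy service catalog (hypothetical; not any product's API)."""
    services: Dict[str, List[Instance]] = field(default_factory=dict)

    def register(self, name: str, instance: Instance) -> None:
        self.services.setdefault(name, []).append(instance)

    def run_health_checks(self) -> None:
        # Real tools run checks on an interval; here the caller triggers a single pass.
        for instances in self.services.values():
            for inst in instances:
                try:
                    inst.healthy = bool(inst.check())
                except Exception:
                    inst.healthy = False  # a check that errors counts as failing

    def lookup(self, name: str) -> List[Tuple[str, int]]:
        """Return (address, port) for healthy instances only."""
        return [(i.address, i.port) for i in self.services.get(name, []) if i.healthy]


if __name__ == "__main__":
    primary_up = {"value": True}
    registry = ServiceRegistry()
    registry.register("billing", Instance("10.0.0.11", 8080, check=lambda: primary_up["value"]))
    registry.register("billing", Instance("10.0.0.12", 8080, check=lambda: True))

    registry.run_health_checks()
    print(registry.lookup("billing"))  # both instances

    primary_up["value"] = False  # simulate the primary failing
    registry.run_health_checks()
    print(registry.lookup("billing"))  # only 10.0.0.12 remains
```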

For enterprises looking to take advantage of Service Discovery, I usually recommend one of three approaches:

- Start with DNS: Use a Service Discovery platform that supports DNS. Consul exposes its service catalog through both DNS and a simple HTTP API, and CoreDNS can serve DNS records backed by etcd’s key-value API. This approach allows legacy applications to route traffic in a flexible way, without load balancers or code changes.

- Grow to a Service Proxy: Put a proxy in front of your services. This architecture has most of the features of cutting-edge service discovery solutions, without a lot of new technology to understand.

  The cloud native world has brought us dozens of great options, but I’ve found that enterprise IT shops get a lot of ROI out of traditional proxies like Apache and Nginx. They are familiar, quick to deploy, and plenty powerful.

  If you are looking for more modern features, Envoy, Kong, and Tyk are worth exploring.

- Consider Mesh Networking: If your enterprise is dealing with “hybrid cloud,” a service mesh network could be your savior. Building on pure Service Discovery and Service Proxy tools, mesh solutions provide a secure, transparent network across data centers and clouds.

  Some of the most popular tools in this space are tightly bound to Kubernetes, making them difficult to use with legacy applications. Others work well outside Kubernetes and are easily accessible to traditional IT. For enterprises that aren’t all-in on Kubernetes, I recommend Consul, Flannel, or Weave Net (shout out to the Weaveworks Denver office!).
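For the “Start with DNS” approach, a client needs nothing more than the operating system’s resolver. Consul, for example, answers DNS queries for names of the form `<service>.service.consul` (on port 8600 by default) and leaves out instances whose health checks are failing. The Python sketch below assumes the host’s resolver has been configured to forward the `.consul` domain to Consul; `resolve_all`, `pick_endpoint`, and the `billing` service name are hypothetical. It resolves every address for a name and picks one at random, a simple form of client-side load balancing.

```python
import random
import socket
from typing import Callable, List, Tuple

Resolver = Callable[[str, int], List[str]]


def resolve_all(hostname: str, port: int) -> List[str]:
    """Return every unique IP address the system resolver returns for `hostname`."""
    addresses: List[str] = []
    for _family, _type, _proto, _canon, sockaddr in socket.getaddrinfo(
        hostname, port, type=socket.SOCK_STREAM
    ):
        ip = sockaddr[0]
        if ip not in addresses:  # getaddrinfo may repeat an address
            addresses.append(ip)
    return addresses


def pick_endpoint(hostname: str, port: int, resolver: Resolver = resolve_all) -> Tuple[str, int]:
    """Pick one resolved address at random (simple client-side load balancing)."""
    addresses = resolver(hostname, port)
    if not addresses:
        raise LookupError(f"no addresses for {hostname}")
    return random.choice(addresses), port


if __name__ == "__main__":
    # In production this might be "billing.service.consul" (hypothetical service name);
    # "localhost" is used here so the example runs anywhere.
    print(pick_endpoint("localhost", 8080))
```

The injectable `resolver` parameter keeps the selection logic testable without a running Consul agent.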

Zero Trust Security

The past few decades have witnessed an evolution in remote access security models. In the early days, dial-up connections and leased lines were considered “secured” by the magic of Ma Bell.

More recently, security depended on a firewalled network perimeter and remote VPN access. This model is fondly known as “Tootsie Pop” security — hard on the outside and chewy in the middle. [Hat tip to Trent R. Hein for this!]

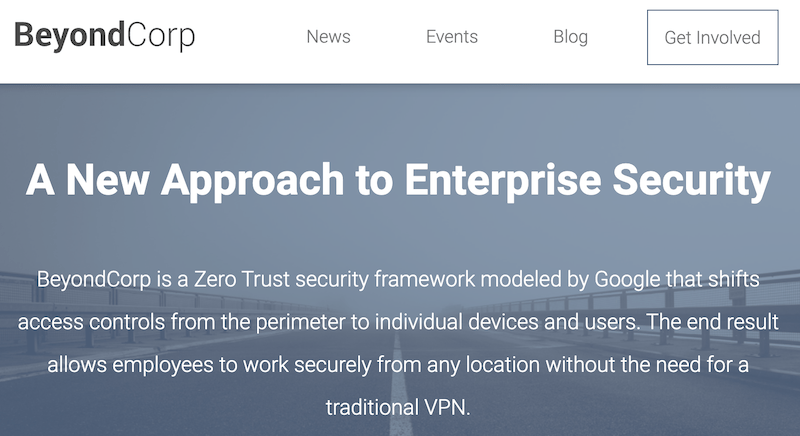

Today, the preferred practice is “Zero Trust Security.” Equally applicable to startups and Fortune 500s, this approach securely authenticates access to individual services, regardless of where on the network the user resides. At a high level, Zero Trust security harkens back to the failed attempts at enterprise “Single Sign On” Portals that Oracle and CA hawked in the ’90s.

Zero Trust is more secure than a VPN model — it is also much more user-friendly. Google helped raise the Zero Trust security model to prominence with its BeyondCorp initiative.
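The core Zero Trust idea is to authenticate every request to every service instead of trusting the network it came from. A short, standard-library Python sketch can illustrate this. The token format here (an HMAC-signed user name plus expiry) is hypothetical and written for illustration only; it is not how BeyondCorp or oauth2_proxy work internally, and production systems should rely on a vetted standard such as OIDC-issued tokens rather than a home-grown scheme.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical shared signing key; a real deployment would load it from a secrets store.
SECRET_KEY = b"replace-with-a-real-secret"


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user: str, ttl_seconds: int = 3600, now: Optional[float] = None) -> str:
    """Create a 'user|expiry|signature' token (hypothetical format, illustration only)."""
    issued_at = time.time() if now is None else now
    payload = f"{user}|{int(issued_at + ttl_seconds)}"
    return f"{payload}|{_sign(payload)}"


def authenticate(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user name if the token is authentic and unexpired, else None.

    Deliberately absent: any check of the caller's IP address or network segment.
    """
    try:
        user, expiry_str, sig = token.rsplit("|", 2)
        expiry = int(expiry_str)
    except ValueError:
        return None  # malformed token
    expected = _sign(f"{user}|{expiry_str}")
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return None  # forged or tampered token (constant-time comparison)
    current = time.time() if now is None else now
    if current >= expiry:
        return None  # expired token
    return user


if __name__ == "__main__":
    token = issue_token("alice", ttl_seconds=300)
    print(authenticate(token))  # alice
    print(authenticate(token.replace("alice", "mallory", 1)))  # None
```

Because `authenticate` ignores where the request came from, the same check applies on the office LAN, over a VPN, or from a coffee shop, which is exactly what distinguishes Zero Trust from the “Tootsie Pop” perimeter model.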

Google’s Cloud Identity-Aware Proxy (IAP) is an easy way to get started with the BeyondCorp model. Alternatively, my go-to open source tool in this space is oauth2_proxy, which “just works.”

For SSH-centric shops, Gravitational’s Teleport is spectacular. Sharing and recording SSH sessions benefits everything from knowledge transfer to incident response to compliance.

Citrix remains the go-to solution for Windows-centric shops where a majority of applications are NOT web-based.

Observability & Monitoring

There’s an ongoing debate in the cloud native community about what “observability” even means. Maybe it’s a three-legged stool, or maybe it’s a high-cardinality event analysis tool? Without going down that rat-hole, I think there are a few opportunities for enterprises to leverage progress in this space:

- Monitoring and metrics collection has come a long way since Nagios was first incarnated in 1999. Cloud native monitoring platforms offer excellent performance, robust alerting/notification systems, and beautiful dashboards, and they work well with dynamic resources like cloud servers and VMs. Prometheus is the de facto cloud native monitoring tool, with Grafana for visualizations. Prometheus’ support for exposing status to legacy tools like Nagios means that most enterprises find the transition painless and rewarding.

- Centralized event logging is a well-established IT service in most enterprises. The next steps are instrumenting applications to produce structured events and debugging traces. With this data, a high-cardinality analysis tool will give your team deep insight into the experiences of individual users. Unfortunately, organizations that are not developing software in-house will not be able to take advantage of the latest advances in event analysis and application tracing. The key opportunity here is to push vendors to produce detailed, structured events across the IT stack.

- Many IT organizations are severely deficient in event/metric retention. When I was a security incident investigator, my heart always sank when we found the hacked organization only had the last week’s worth of logs. Sadly, the truth is that most security incidents take months, not weeks, to discover. Your organization should be able to look back through application and infrastructure event logs for at least the past year.
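The monitoring bullet above names Prometheus; part of what makes it easy to adopt is its plain-text exposition format. Each monitored process serves its current metric values over HTTP (conventionally at `/metrics`), and Prometheus scrapes them on a schedule. The Python sketch below renders hypothetical metrics in that text format using only the standard library. In practice, the official `prometheus_client` library (with `Counter`, `Gauge`, and `start_http_server`) generates and serves this output for you.

```python
from typing import Dict, List, Tuple

# One sample = (label set, value). Metric names and values used below are hypothetical.
Sample = Tuple[Dict[str, str], float]


def escape_label_value(value: str) -> str:
    """Escape backslash, double quote, and newline, as the text format requires."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metric(name: str, metric_type: str, help_text: str, samples: List[Sample]) -> str:
    """Render one metric family in the Prometheus text exposition format."""
    escaped_help = help_text.replace("\\", "\\\\").replace("\n", "\\n")
    lines = [f"# HELP {name} {escaped_help}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        if labels:
            label_str = ",".join(f'{key}="{escape_label_value(val)}"' for key, val in labels.items())
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_metric(
        "http_requests_total", "counter", "Total HTTP requests handled.",
        [({"method": "GET", "code": "200"}, 1027), ({"method": "POST", "code": "500"}, 3)],
    ), end="")
```

Serving that string with a `Content-Type` of `text/plain; version=0.0.4` is all a scrape target needs, which is why even legacy applications can be instrumented with modest effort.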

Object Storage

Databases have been around for more than 50 years. While we’re historically familiar with Relational Databases (1970), the cloud native ecosystem has brought us completely new types of databases: NoSQL, NewSQL, Document, Time-Series, and Column-Store, to name a few.

Object Storage is a type of cloud native database that enterprises can benefit from now. Far from a Relational Database, Object Storage systems are optimized for storing files. SQL isn’t supported — you store and fetch files based on a URL. Simplicity is its strength: Object Storage is supported by most modern analytics, security, and data science tools.

Although AWS has had S3 object storage since 2006, Google and Azure now offer very competitive services. These hosted Object Storage services share the same basic model (and Google Cloud Storage offers an S3-interoperable API), so they are somewhat of a commodity [data gravity aside]. Some department in your enterprise (marketing, data science, IT, etc.) is likely already using S3 today.

DANGER: Many organizations have recently exposed sensitive information via misconfigured AWS S3 and GCP GCS buckets. You MUST have a solid “Cloud Object Storage” security policy and automated monitoring in place before storing non-public information in the cloud.
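As one small piece of that automated monitoring, here is a Python sketch that flags S3-style bucket policy statements granting access to everyone (`"Principal": "*"`). It assumes the policy JSON has already been fetched; for AWS, boto3’s `get_bucket_policy` returns it as a string under the `"Policy"` key. The sketch is deliberately conservative and incomplete: it ignores `Condition` blocks (which can legitimately narrow a public grant) and `NotPrincipal`, and it does not inspect ACLs or Block Public Access settings, which a real policy check must also cover. The bucket name and account ID in the example are placeholders.

```python
import json
from typing import Any, Dict, List


def _is_wildcard_principal(principal: Any) -> bool:
    """True if a policy Principal element means 'anyone'."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        aws = principal.get("AWS")
        values = aws if isinstance(aws, list) else [aws]
        return "*" in values
    return False


def public_statements(policy_json: str) -> List[Dict[str, Any]]:
    """Return Allow statements with a wildcard Principal (Conditions are NOT evaluated)."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear without a list
        statements = [statements]
    return [
        s for s in statements
        if s.get("Effect") == "Allow" and _is_wildcard_principal(s.get("Principal"))
    ]


if __name__ == "__main__":
    example_policy = json.dumps({
        "Version": "2012-10-17",
        "Statement": [
            {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
             "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
            {"Sid": "TeamWrite", "Effect": "Allow",
             "Principal": {"AWS": "arn:aws:iam::111122223333:role/data-team"},
             "Action": "s3:PutObject", "Resource": "arn:aws:s3:::example-bucket/*"},
        ],
    })
    for stmt in public_statements(example_policy):
        print(f"PUBLIC: {stmt.get('Sid', '<no Sid>')} allows {stmt.get('Action')} to everyone")
```

Run on a schedule against every bucket, even a check this simple turns a silent misconfiguration into an alert.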

Many enterprises will find performance and security improvements by hosting Object Storage on-prem. For those that want a self-hosted solution, I recommend MinIO. While there are several commercial, self-hosted, S3-compatible products [1][2][3], in this case the open source choice is best.

Tread With Caution

I’m convinced that the tools above hold tremendous opportunity for every enterprise. They provide significant gains, and a pilot can be run quickly without staff training or forklift technology replacement.

Despite the risk of Twitter backlash, there are two popular cloud native tools that I would recommend enterprises avoid rushing to adopt in 2020: Kubernetes and Serverless.

Kubernetes and containerization are very powerful abstractions. They are a natural next step in the datacenter once Infrastructure as Code is in place. However, these tools require tight collaboration between Dev and Ops teams and are a rough fit for enterprises with legacy software.

Serverless promises to liberate IT from server provisioning, patching, and maintenance. Unfortunately, it generally requires rewriting applications from the ground up. While Serverless may be worth investigating for greenfield projects, it’s a bad fit for in-production enterprise applications.

Seize This Opportunity!!

Yes — these tools are awesome technical enablers that will yield real operational and capital savings! But that’s not even the best thing about them:

Cloud native tooling can help to foster powerful collaboration between Dev and Ops teams. These tools all provide better visibility across the organization, create agility for rapid response, and improve safety by lowering the risk of changes.

Consider these cloud native tools in your 2020 plans!

Evi Nemeth used to say that open source software is “free, like a box of puppies.” Even with no licensing cost, you’re going to be taking care of the thing and picking up after it. As you explore open source in your organization, treat it like “real” software: allocate the resources you would to any commercial software deployment project.

Looking for help evaluating cloud native tools in your enterprise? I'm focused on where software, infrastructure, and data meet security, operations, and performance. Follow or DM me on Twitter at @nedmcclain.